Performance issues even with a 8 Core + 32 gigs.

-

Jay Swaminarayan!

Dear @dan, it's always great to have you as a silver lining. This is a slightly long story, as I am narrating over a week of work on performance. We are from Shree Swaminarayan Gurukul Organization (https://gurukul.org), a non-profit school chain in India that is moving almost all schooling online during this pandemic. Below is our use case; we are looking forward to learning what we are missing in optimizing performance.

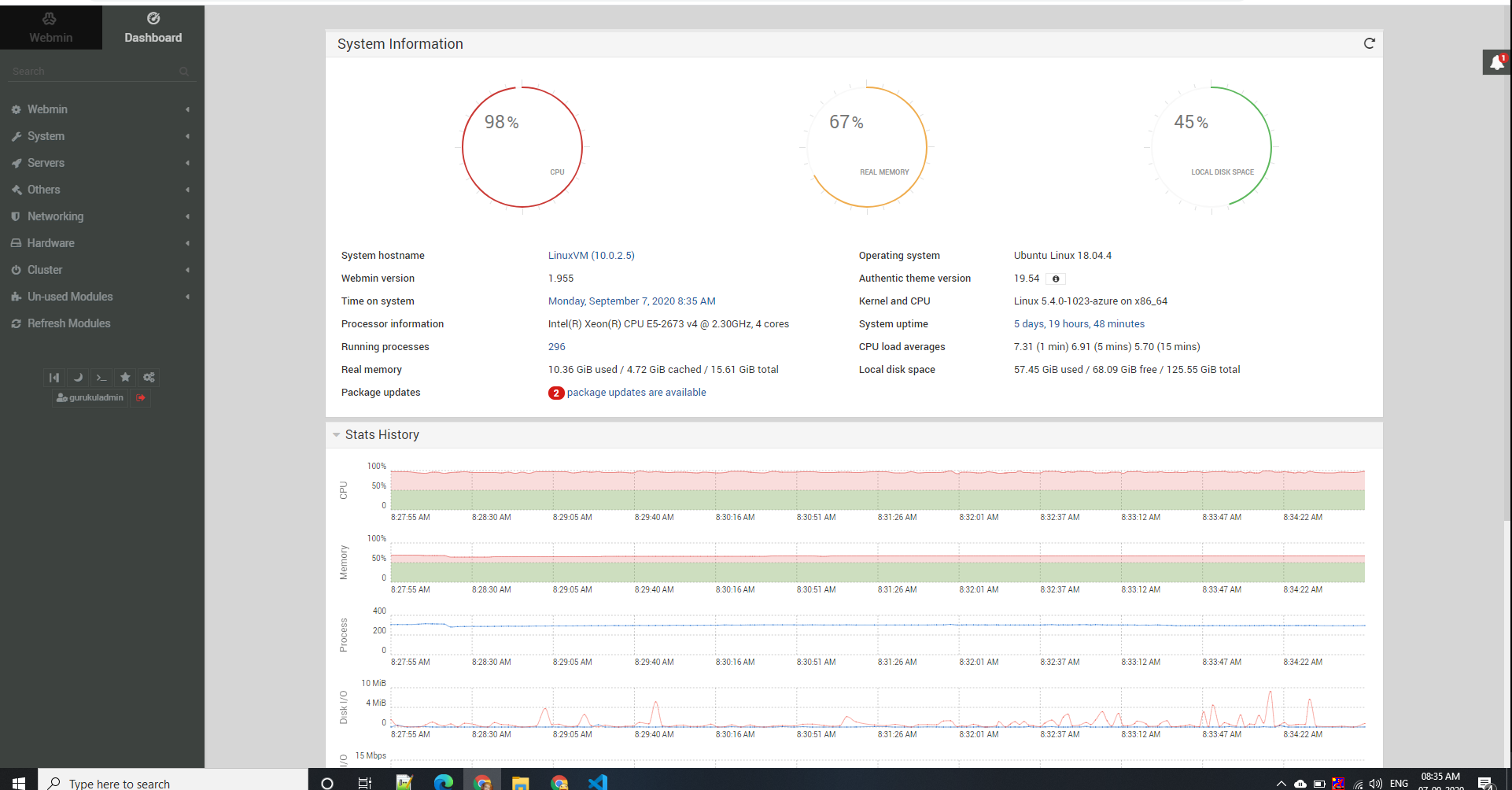

Server Config

- Azure VM: Ubuntu Linux 18.04.4

- Linux 5.4.0-1023-azure on x86_64

- CPU: Intel(R) Xeon(R) CPU E5-2673 v4 @ 2.30GHz, 8 cores

- Memory: 31.36 GiB total

- Storage: 156.92 GiB total (SSD)

- Running 1 Wordpress site, multiple angular sites, .netcore small services

- FA installed on :9011 and reverse proxied from Apache2 Host

Use Case & Timeline:

- Intent: Scheduled online tests from 7th Sep (today) to 14th Sep, planned a month back as students are not coming to school due to CORONA.

- Users: Serve logins to 4000 - 5000 students for an external app (android app, backend not on the same server) and 7000 is the max users we have for this case

- Launch: 1st Sep - App Integration finishes to handle login flow with CODE Grant & Offline Access, using AppAuth-Android

- Onboarding Students on App: 1st & 2nd Sep - Distribution of Credentials and Tutorials for Students

- Trial #1: 3rd Sep - Planned Trial Test #1 for Testing Load

- Optimization#1: Resized Server from 2 Cores + 16gb to 4 Cores + 16gb

- Trial #2: 5th Sep - Planned Trial Test #2 for Testing Load

- Optimization#2: Optimized mpm_event apache2

- Real Exam: 7th Sep - Real Exam Day, still halted.

- Optimization#3: 7th Sep noon - Resized Server from 4 Cores + 16gb to 8 Cores + 32gb

- Optimization#4: 7th Sep mid day - PBKDF2 (f=24000) to SHA-256 (f=2)

- Seeking Support: Writing this topic for help.

Happenings:

- During Launch itself, the server was overloaded with requests, and FA-SSO was not served to almost all users

- We were not sure what went wrong and just rebooted the VM. Meanwhile, students who had waited up to 30 mins for the server to load gave up hope for the day.

- Thus, we initially thought there must have been some blocking process and REBOOT would do each time there is a load.

- On 3rd Sep trial #1, once again there was a flood, this day we already rebooted the machine before the test time.

- However, this did not solve the problem and we resized VM, Optimization #1

- We anticipated that we were good to go for 5th Sep, but the same issue recurred and requests flooded the server. Only then did we start researching the metrics and, with some local help, found that mpm_event was the culprit, with insufficient server limits set. After fixing that, we were almost confident about the load and declared to all school principals and students that the issue was completely resolved.

- We were happy that this trial strategy had helped us avoid issues on the real day, i.e. today. We also read https://fusionauth.io/blog/2019/02/26/got-users-100-million and felt assured that we were good to go on the 7th, today.

- Today, to our surprise, we faced the same issue yet again, and students were not served FA-SSO. While this was happening, we first resized the VM again (Optimization #3). In parallel we started digging deeper into the issue; FA was not actually on our hit list.

- We still had the same issue. We observed load on Java and learned that hashing could be a bottleneck, so we changed PBKDF2 (f=24000) to SHA-256 (f=2) with rehashing on login.

- We also quickly decided to divide users into different time slots to manage the load and somehow finished the day, with many students failing to attempt the test; we will deal with that later.

- Taking things really seriously, we started checking each process and, to our surprise, found FA (Java) loading the VM even for a small number of users, far fewer than 10M, and time-slotted at that.

Server Footprints:

1. Here is the load test we ran later, after applying every optimization we could think of: server upgrade (CPU + RAM) and the lightest cryptography, SHA-256 (factor = 2). This is the footprint for a load of 2000 clients distributed across 1 min; you can see the server HALTS after 15 secs.

https://bit.ly/33aarYk

2. Here is the load test of the login API for a load of 3500 clients distributed across 1 min, taking a whopping 14 secs to serve.

https://bit.ly/2GwRbN2

End of the Story,

NEED HELP!

PS: For tomorrow we have divided the students into slots running until the evening. However, the day after tomorrow we intend to run the test for everyone at the same time.

Thanking you and hoping for the best.

-

Hi,

Sorry to hear load testing is causing problems. I'm pretty sure I don't have all the information needed to diagnose this, but the server size looks adequate. Every load test is different, so all I can do is provide suggestions for further investigation.

What version of FusionAuth are you running? What actions are you taking (it looks like just login, is that correct)? Where is your database running? Are you using the elasticsearch search engine?

Have you identified which component is causing issues? It could be the database, apache, or FusionAuth, so I'd try to identify which one is the issue. Can you remove apache from the equation and login to fusionauth directly? Can you monitor your database and see if there are any hung processes or slow queries? Are there any errors in the FusionAuth logs during the load tests? How about errors in the apache logs?
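One way to take apache out of the equation, as suggested above, is to time the same request against the proxied URL and against FusionAuth's own port. The following is a minimal sketch using only the Python standard library; the helper names (`time_calls`, `summarize`, `fetch`, `compare`) are hypothetical, and the URLs you pass in are your own.

```python
import statistics
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor


def time_calls(call, total, workers):
    """Run `call` `total` times on `workers` threads; return per-call seconds."""
    def timed(_):
        start = time.perf_counter()
        call()
        return time.perf_counter() - start

    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(timed, range(total)))


def summarize(latencies):
    """Return (median, worst) latency in seconds."""
    return statistics.median(latencies), max(latencies)


def fetch(url):
    """Build a zero-argument callable that GETs `url` and reads the body."""
    def call():
        with urllib.request.urlopen(url, timeout=30) as response:
            response.read()
    return call


def compare(targets, total=200, workers=20):
    """Print median and worst latency for each labelled URL in `targets`."""
    for label, url in targets.items():
        median, worst = summarize(time_calls(fetch(url), total, workers))
        print(f"{label}: median {median:.3f}s, worst {worst:.3f}s")
```

Calling `compare` with a dict such as `{"via apache": <proxied authorize URL>, "direct": <the same path on port 9011>}` gives two comparable lines. If both are equally slow, the proxy is probably not the bottleneck; this is a rough indicator, not a replacement for a proper load-testing tool.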

Have you ensured the load test is a good representation of what will be happening in the real world (does it replicate the usage you expect or have seen in the past)? Do you expect 2000 users to sign in within one minute?

Please let me know if the above questions point to any other areas of investigation that you undertake.

By the way, if this is mission critical software, we highly recommend signing up for a support plan, which will guarantee support response time and provide access to the engineering team via support tickets. More here.

-

@sswami have you made any progress? Just wanted to check in.

-

Thank you for your response. We tried a few things, but performance is not improving.

Some things you asked:

- Fusionauth version 1.17.0

- Actions:

- We are just using FA for login and token exchange tasks.

- However, during the load test we only used SSO.

- SSO: only loading the login page, GET request oauth2/authorize?client_id={}&response_type=code&redirect_uri=%2Flogin&state={}

- We combined API: /oauth2/login for 1 load test (I mention later when).

- Database is running under the same machine.

- Yes we are using elastic search.

- We observed

- The MySQL process was using about 5% of CPU on average

- Disk IOPS was around 70 (plenty of room was available for more IOs),

- apache was also stable around 2-3% of CPU usage. During that time it was quickly serving other requests.

- There were no errors in apache.

We found error in fusionauth

java.lang.OutOfMemoryError: Java heap space
at java.base/java.lang.reflect.Method.copy(Method.java:158)
at java.base/java.lang.reflect.ReflectAccess.copyMethod(ReflectAccess.java:102)
at java.base/jdk.internal.reflect.ReflectionFactory.copyMethod(ReflectionFactory.java:308)
at java.base/java.lang.Class.getDeclaredMethod(Class.java:2555)
at com.google.inject.internal.cglib.proxy.$Enhancer.getCallbacksSetter(Enhancer.java:809)
at com.google.inject.internal.cglib.proxy.$Enhancer.setCallbacksHelper(Enhancer.java:797)
at com.google.inject.internal.cglib.proxy.$Enhancer.setThreadCallbacks(Enhancer.java:791)
at com.google.inject.internal.cglib.proxy.$Enhancer.registerCallbacks(Enhancer.java:760)
at com.google.inject.internal.ProxyFactory$ProxyConstructor.newInstance(ProxyFactory.java:269)
at com.google.inject.internal.ConstructorInjector.provision(ConstructorInjector.java:114)
at com.google.inject.internal.ConstructorInjector.construct(ConstructorInjector.java:91)
at com.google.inject.internal.ConstructorBindingImpl$Factory.get(ConstructorBindingImpl.java:306)
at com.google.inject.internal.SingleParameterInjector.inject(SingleParameterInjector.java:42)
at com.google.inject.internal.SingleParameterInjector.getAll(SingleParameterInjector.java:65)
at com.google.inject.internal.ConstructorInjector.provision(ConstructorInjector.java:113)
at com.google.inject.internal.ConstructorInjector.construct(ConstructorInjector.java:91)
at com.google.inject.internal.ConstructorBindingImpl$Factory.get(ConstructorBindingImpl.java:306)
at com.google.inject.internal.FactoryProxy.get(FactoryProxy.java:62)
at com.google.inject.internal.SingleParameterInjector.inject(SingleParameterInjector.java:42)
at com.google.inject.internal.SingleParameterInjector.getAll(SingleParameterInjector.java:65)
at com.google.inject.internal.ConstructorInjector.provision(ConstructorInjector.java:113)
at com.google.inject.internal.ConstructorInjector.construct(ConstructorInjector.java:91)
at com.google.inject.internal.ConstructorBindingImpl$Factory.get(ConstructorBindingImpl.java:306)
at com.google.inject.internal.FactoryProxy.get(FactoryProxy.java:62)
at com.google.inject.internal.SingleParameterInjector.inject(SingleParameterInjector.java:42)
at com.google.inject.internal.SingleParameterInjector.getAll(SingleParameterInjector.java:65)
at com.google.inject.internal.ConstructorInjector.provision(ConstructorInjector.java:113)
at com.google.inject.internal.ConstructorInjector.construct(ConstructorInjector.java:91)
at com.google.inject.internal.ConstructorBindingImpl$Factory.get(ConstructorBindingImpl.java:306)
at com.google.inject.internal.FactoryProxy.get(FactoryProxy.java:62)
at com.google.inject.internal.SingleParameterInjector.inject(SingleParameterInjector.java:42)
at com.google.inject.internal.SingleParameterInjector.getAll(SingleParameterInjector.java:65)

- Thus we increased the memory footprint to 2GiB in the fusionauth config file (fusionauth-app.memory property).

- We ran the test again.

- It was able to serve 3000 requests in a minute.

- Test report https://bit.ly/3icn8rW.

- But the CPU usage was more than 50% for the FusionAuth Java process and around 7-11% for FA's Elasticsearch.

Loadtest?

- Simply loading the SSO login page with a GET request was consuming half of the CPUs, i.e. 4 cores, without even logging in.

- With the login API combined, 1500 logins across 1 min is what we are able to serve, with >50% CPU usage. https://bit.ly/35jESOp

- Going beyond that, response time increased. https://bit.ly/3m8Nhum

- During the test we also reduced the PBKDF2 factor to 4000 from 20000, so that crypto is out of the equation as a bottleneck. We also tried SHA-256 with a factor of only 2 to confirm the effect of crypto processing. The results were the same.

- There are peak times when we expect such load. During all the above tests, the DB and apache were stable.

Is this the performance we can expect from fusionauth with our CPU and memory config? Turning around 40TPS at max? Certainly there is something we are missing here. Please guide us.
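For reference, the per-minute figures quoted in this post convert to per-second rates as follows. This is a small arithmetic sketch; `per_second` is a hypothetical helper, and the last case assumes (this is an assumption, not a measurement) that all 7000 users arrive within a single minute.

```python
def per_second(requests, window_seconds=60):
    """Average request rate when `requests` are spread evenly over a window."""
    return requests / window_seconds


# Figures quoted in this thread, each spread over one minute.
print(per_second(3000))  # SSO page loads only -> 50.0
print(per_second(1500))  # page load plus login API -> 25.0

# Assumption: the full 7000 users arrive within the same minute.
target = per_second(7000)
print(round(target, 1))                      # -> 116.7
print(round(target / per_second(1500), 1))   # -> 4.7 times the measured rate
```

Under that assumption the target is several times what a single node served in these tests, so either the arrival window has to widen (time slots) or the per-node rate or node count has to grow.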

Hoping...

-

Seems like you are doing all the right things.

@sswami said in Performance issues even with a 8 Core + 32 gigs.:

java.lang.OutOfMemoryError: Java heap space

at java.base/java.lang.reflect.Method.copy(Method.java:158)

If you have 32GB of memory, can you increase the amount available to FusionAuth beyond 2GB? Try 8 or 16. That should help with the heap space issue.

Can you try that and let me know if there are new errors in the FusionAuth log file?

-

@dan Surely... I shall try and revert.

However, can I ask one thing? What performance should we expect with this VM? Can you give me an approximate range? Or what is the best you have achieved under test load, and how does that translate to our machine?

-

Sorry, I don't understand: What do you mean by "I shall try and revert."?

I'm not aware of any load tests on this type of VM, but I'll ask the team and get back to you.

-

Word from the team is we haven't load tested on a VM recently.

-

Hello @dan !

Any update on the load test? Can you suggest what our server config and resources should be to handle 7000+ login requests at a time? We are still facing this issue. The fusionauth-app (https://login.gurukul.org/oauth2/authorize) Java process consumes 100% CPU when more than around 1000 users try to log in at a time.

Also, as we have never had regular concerns and do not have many now, we have not opted for a support package. This issue, if solved, will be the most support we would actually need.

Thank you...

-

Java process consumes 100% CPU when more than around 1000 users try to log in at a time

It's ONLY a max of 20 clients per second and 1000 users over a min, with an average response time of 10 secs!

We have checked everything we could find on the internet. But with our limited knowledge, we are unable to solve this.
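To put the figures above in perspective, Little's law (average requests in flight = arrival rate × average response time) gives a rough idea of how many requests are open at once. This is a back-of-the-envelope sketch that assumes steady-state averages; the function names and the worker count of 50 are hypothetical and used only for illustration.

```python
def in_flight(arrivals, window_seconds, avg_response_seconds):
    """Little's law: average number of requests being served at any moment."""
    rate = arrivals / window_seconds
    return rate * avg_response_seconds


def response_budget(arrivals, window_seconds, workers):
    """Average response time at which `workers` requests are open on average."""
    rate = arrivals / window_seconds
    return workers / rate


# 1000 users over one minute with a 10 second average response time.
print(round(in_flight(1000, 60, 10)))  # -> 167

# Hypothetical: with 50 request workers, the average response time
# would need to stay near this many seconds to avoid queueing.
print(round(response_budget(1000, 60, 50), 2))  # -> 3.0
```

So a modest arrival rate combined with a 10 second response time still means well over a hundred simultaneous open requests, which is why the latency itself is worth investigating first.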

-

Did you try changing the memory allocated to FusionAuth, as I mentioned here: https://fusionauth.io/community/forum/topic/370/performance-issues-even-with-a-8-core-32-gigs/5

If you don't have enough memory and there are a large number of OutOfMemoryErrors, that could be causing the symptoms you are seeing.

-

Oh! Yes, we tried that much earlier, sorry I didn't tell you... We are past the heap memory errors. Right now it's taking 100% CPU.

-

If your system is CPU bound then you need to scale this horizontally. Each node will only be able to hash n passwords per second. Once you reach this limit, the only way to perform more hashes per second is to reduce the hash complexity or add more nodes. Reducing the hash complexity is not recommended as it makes these hashes easier to brute force.

It is clear that you have a lot of knowledge of your environment and have invested a lot of time into this solution. We have many large scale production instances, and I'm confident FusionAuth can scale to your requirements. However, as I'm sure you're aware, performance tuning is not a simple equation.
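The "n passwords per second" limit can be estimated empirically. The sketch below measures single-core PBKDF2-HMAC-SHA256 throughput with Python's `hashlib` and derives a node count from it. It measures Python/OpenSSL on whatever machine runs it, not FusionAuth's Java implementation, so treat the numbers as an order-of-magnitude guide only; `hashes_per_second` and `nodes_needed` are hypothetical helper names.

```python
import hashlib
import math
import os
import time


def hashes_per_second(iterations, samples=5):
    """Measure single-core PBKDF2-HMAC-SHA256 throughput on this machine."""
    salt = os.urandom(16)
    start = time.perf_counter()
    for _ in range(samples):
        hashlib.pbkdf2_hmac("sha256", b"example-password", salt, iterations)
    elapsed = time.perf_counter() - start
    return samples / elapsed


def nodes_needed(target_logins_per_second, per_node_rate):
    """Smallest node count whose combined hash rate covers the target."""
    return math.ceil(target_logins_per_second / per_node_rate)


if __name__ == "__main__":
    # Iteration counts mentioned in this thread.
    for iterations in (4000, 24000):
        rate = hashes_per_second(iterations)
        print(f"{iterations} iterations: about {rate:.0f} hashes/s per core")
```

For example, `nodes_needed(100, 30)` returns 4: if one node sustains 30 logins per second and the target is 100, three nodes fall short and four are enough. Hashing is only one part of a login, so the real per-node rate will be lower than the raw hash rate.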

There are a lot of possible variables here. The best way for you to get this tuned and ready for production is to purchase support so you can get engineering support.

-

@robotdan Thank you very much for your reply... Well, this is 1 time but although Please let me know where to purchase for the support and a direct link to the suited package shall be appreciated.

Moreover,

- Why is just rendering the SSO page taking so long? Password hashing comes much later in the story...

- We have reduced crypto all the way down to factor=2 with SHA-256, but an 8-core CPU is still reaching 100% at about 25-30 TPS

- We are trying the "creating nodes" way.

- Also locally trying to profile FusionAuth Process Stack.

- Also, please do us the favour of telling us, at a high level or as an approximation: if nothing else is running on the VM and it only serves the FusionAuth SSO page, what is the best performance we should expect? I agree there must be some configs, threads and workers in the equation. But if you were to optimize all of those, what would you achieve, approximately? This will help us understand whether it is a limitation of server resources (CPU/RAM/nodes) or simply a misconfiguration somewhere.

-

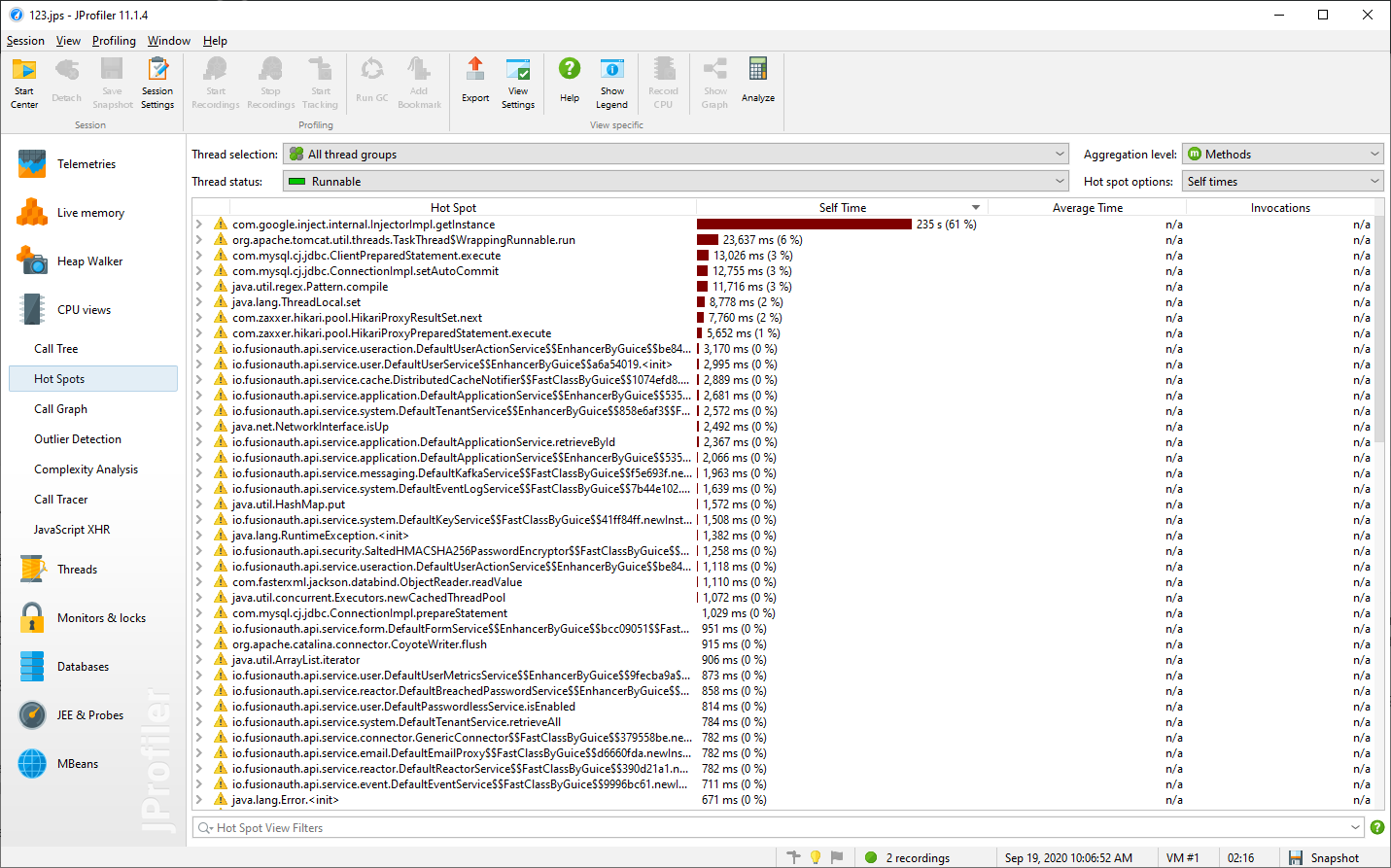

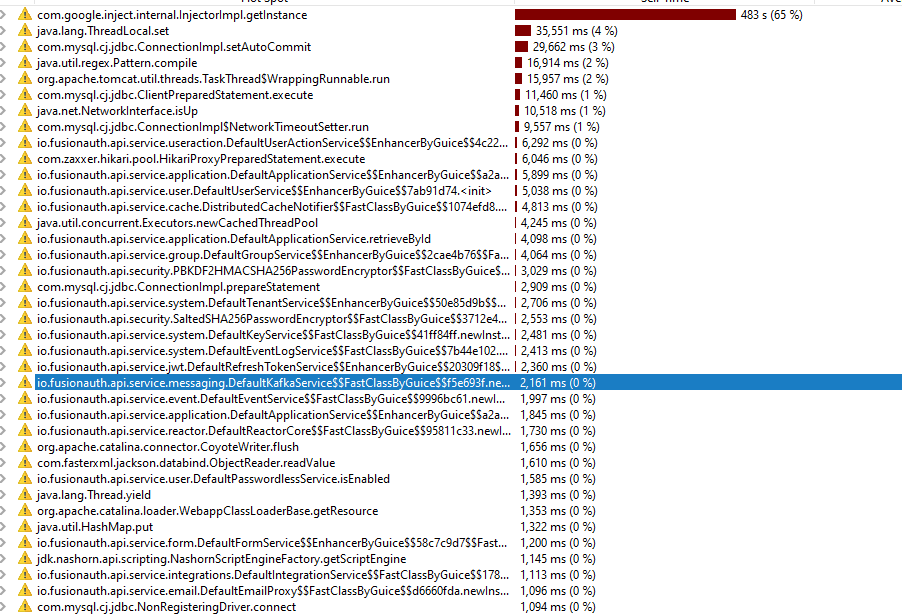

We performed load testing using the jMeter tool on a small machine to do CPU sampling profiling. Configuration: Core i5, 4 cores, 3.6GHz, 16GB RAM. 8GB was allocated to the FusionAuth server. DB: MySQL 8.0.21. Fresh DB setup, no users except admin.

Performance testing scenario

25 users, each user loading the login page 100 times: http://localhost:9011/oauth2/authorize?client_id=30d6e7be-407d-..

Results

With Fusionauth Server 1.17.0 - 25 page/sec

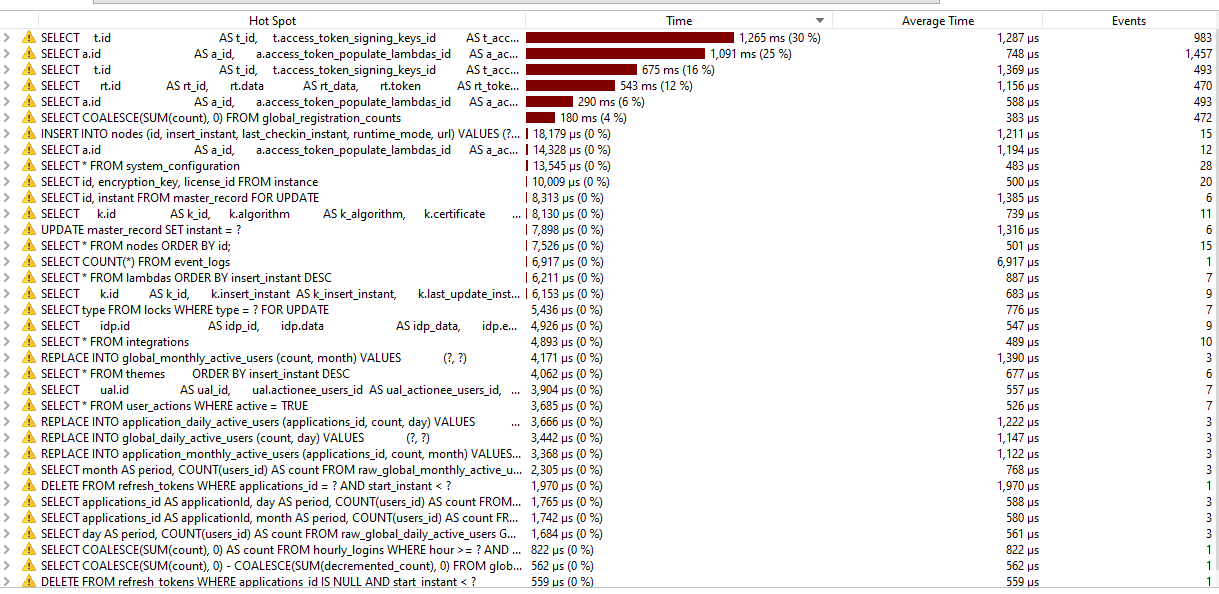

With old Fusionauth Server 1.6.0 - 112 page/sec

On the 1.17.0 server we did CPU sampling and observed this.

It seems that the Google library is causing the issue, while the older server performed better.

Moreover, I could not upgrade from 1.17.0 to the new version 1.19.2.

Below is the exception log

-- Update the version

UPDATE version

SET version = '1.19.0';

. Cause: java.sql.SQLSyntaxErrorException: You have an error in your SQL syntax; check the manual that corresponds to your MySQL server version for the right syntax to use near 'DELIMITER $$

CREATE FUNCTION generate_id()

RETURNS BINARY(16)

NOT DETERMI' at line 1

at org.apache.ibatis.jdbc.ScriptRunner.executeFullScript(ScriptRunner.java:133)

at org.apache.ibatis.jdbc.ScriptRunner.runScript(ScriptRunner.java:108)

at com.inversoft.maintenance.db.SQLExecutor.executeSQLScriptWithError(SQLExecutor.java:43)

at com.inversoft.maintenance.db.JDBCMaintenanceModeDatabaseService.upgrade(JDBCMaintenanceModeDatabaseService.java:337)

at java.base/java.util.concurrent.FutureTask.run(FutureTask.java:264)

at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1130)

at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:630)

... 1 common frames omitted

Caused by: java.sql.SQLSyntaxErrorException: You have an error in your SQL syntax; check the manual that corresponds to your MySQL server version for the right syntax to use near 'DELIMITER $$

CREATE FUNCTION generate_id()

RETURNS BINARY(16)

NOT DETERMI' at line 1

at com.mysql.cj.jdbc.exceptions.SQLError.createSQLException(SQLError.java:120)

at com.mysql.cj.jdbc.exceptions.SQLError.createSQLException(SQLError.java:97)

at com.mysql.cj.jdbc.exceptions.SQLExceptionsMapping.translateException(SQLExceptionsMapping.java:122)

at com.mysql.cj.jdbc.StatementImpl.executeInternal(StatementImpl.java:764)

at com.mysql.cj.jdbc.StatementImpl.execute(StatementImpl.java:648)

at org.apache.ibatis.jdbc.ScriptRunner.executeStatement(ScriptRunner.java:236)

at org.apache.ibatis.jdbc.ScriptRunner.executeFullScript(ScriptRunner.java:128)

... 7 common frames omitted

Looking at the exception, the 1.19.0 migration script has a syntax error at "DELIMITER $$" on MySQL 8.0.21.

-

Hiya,

You can purchase a support plan here: https://fusionauth.io/pricing (you'll want either 'enterprise' or 'premium' as the 'developer' plan doesn't include support).

I think the bug you are seeing around the SQL delimiter was fixed in 1.19.5, so if you want to upgrade to 1.19.x, I'd recommend upgrading to at least 1.19.5. (The bug is here: https://github.com/FusionAuth/fusionauth-issues/issues/859 but in the comments on https://github.com/FusionAuth/fusionauth-issues/issues/862 Daniel mentions removing that code.)

-

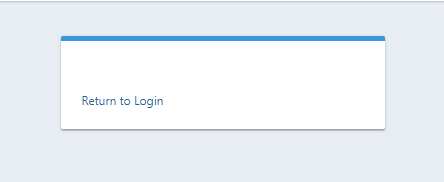

Thank you,

After upgrading to 1.19.6, there was no error in the DB migration with MySQL 8.x. But I was unable to load the FusionAuth dashboard. After a successful login it looks like the attached screenshot.

Then I altered the FusionAuth row in the applications table. I changed the data column

From

{"data": {}, "jwtConfiguration": {"enabled": false, "timeToLiveInSeconds": 0, "refreshTokenTimeToLiveInMinutes": 0}, "loginConfiguration": {"allowTokenRefresh": false, "generateRefreshTokens": false, "requireAuthentication": true}, "oauthConfiguration": {"clientId": "3c219e58-ed0e-4b18-ad48-f4f92793ae32", "logoutURL": "/", "clientSecret": "Zj...", "enabledGrants": ["authorization_code"], "generateRefreshTokens": true, "authorizedRedirectURLs": ["/login"], "requireClientAuthentication": true}, "verifyRegistration": false, "samlv2Configuration": {"debug": false, "enabled": false, "xmlSignatureC14nMethod": "exclusive_with_comments"}, "passwordlessConfiguration": {"enabled": false}, "registrationConfiguration": {"type": "basic", "enabled": false, "fullName": {"enabled": false, "required": false}, "lastName": {"enabled": false, "required": false}, "birthDate": {"enabled": false, "required": false}, "firstName": {"enabled": false, "required": false}, "middleName": {"enabled": false, "required": false}, "loginIdType": "email", "mobilePhone": {"enabled": false, "required": false}, "confirmPassword": false}, "authenticationTokenConfiguration": {"enabled": false}}

To

{"jwtConfiguration": {"enabled": true, "timeToLiveInSeconds": 60, "refreshTokenExpirationPolicy": "SlidingWindow", "refreshTokenTimeToLiveInMinutes": 60, "refreshTokenUsagePolicy": "Reusable"},"registrationConfiguration": {"type":"basic"}, "oauthConfiguration": {"authorizedRedirectURLs": ["/login"], "clientId": "3c219e58-ed0e-4b18-ad48-f4f92793ae32", "clientSecret": "Zm...=", "enabledGrants": ["authorization_code"], "logoutURL": "/", "generateRefreshTokens": true, "requireClientAuthentication": true},"loginConfiguration": {"allowTokenRefresh": false, "generateRefreshTokens": false, "requireAuthentication": true}}

Then it started loading.

For now this migration issue is fixed.

But the performance hotspot still exists in the latest version when just loading the login page.

DB was performing well, the average execution was around 1ms

-

Hiya,

Based on this message:

Please let me know where to purchase for the support and a direct link to the suited package shall be appreciated.

I believe you were planning to purchase support? If so, please open a support ticket and reference this forum post.

Otherwise I'd be interested to hear how your horizontal scaling efforts are going. You might also consider switching to the F class of Azure VMs, as those appear from the docs to be better suited for CPU intensive operations: https://azure.microsoft.com/en-us/blog/f-series-vm-size/

-

We have already implemented horizontal scaling. Currently 4 nodes are running. So for now users are able to login.

It would be great if you could fix the Google getInstance issue in an upcoming release.

Moreover, when running migration 1.19.0 over a large data set, the migration below generated duplicate ids in the refresh_tokens table:

UPDATE refresh_tokens

SET id = SUBSTR(CONCAT(MD5(RAND()), MD5(RAND())), 3, 16)

WHERE id IS NULL;

The number of rows was 60,000, of which 422 were generated as duplicates, so the migration was failing. For now I have removed the duplicate records; for those users a new refresh token will be generated during login.

You may want to fix this issue, as other customers may face the same problem.

-

Thanks for letting us know about the unique key (UK) violations. I would have expected

SUBSTR(CONCAT(MD5(RAND()), MD5(RAND())), 3, 16)

to generate a unique value. We'll have to do some testing.
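A birthday-bound estimate helps frame that testing. If the 16 hexadecimal characters were uniformly random (64 bits), collisions among 60,000 rows would be vanishingly unlikely, so the reported duplicates suggest the expression yields far fewer distinct values in practice. The sketch below is plain arithmetic with hypothetical helper names; it treats the 422 reported duplicates as 422 colliding pairs, which is an approximation, and it does not identify the cause.

```python
def expected_duplicate_pairs(rows, distinct_values):
    """Birthday approximation: expected number of colliding pairs."""
    return rows * (rows - 1) / (2 * distinct_values)


def implied_distinct_values(rows, observed_pairs):
    """Invert the approximation: value-space size that would explain the count."""
    return rows * (rows - 1) / (2 * observed_pairs)


rows = 60_000

# 16 hex characters, if uniformly random, give 16**16 = 2**64 possible values.
print(expected_duplicate_pairs(rows, 16 ** 16))

# Value-space size that would produce about 422 colliding pairs.
print(implied_distinct_values(rows, 422))
```

The first figure is far below one, while the second comes out at roughly four million distinct values, which points at limited randomness in the inputs rather than bad luck.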