Multiple nodes sharing IP address?

-

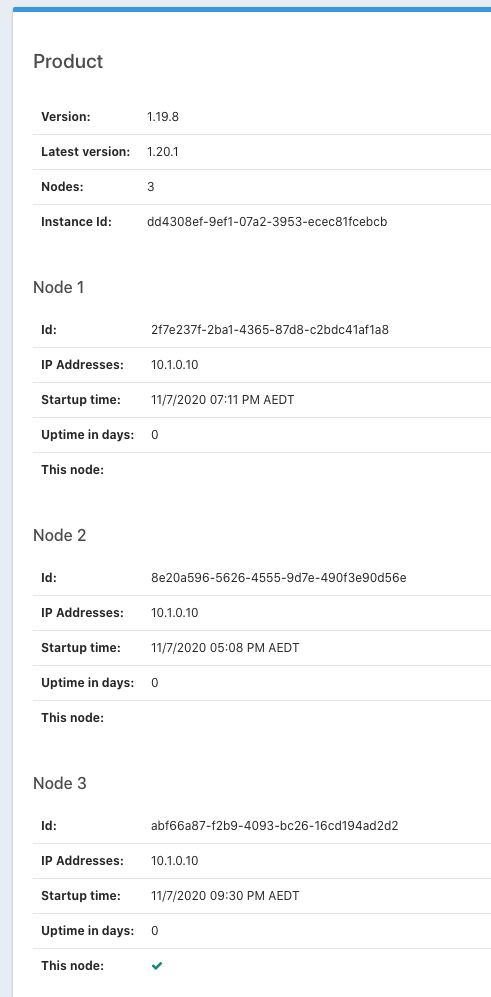

On the About screen we have indications that all our nodes are sharing the same IP address. The nodes seem to be restarting at odd times too, unrelated to system load. The start times shown here are Saturday evening and we had zero login activity until my 9:30pm login to generate the screen capture.

Is any of this expected?

-

That seems weird to me.

Are these running in k8s or on separate servers?

-

Ugh, somehow I wasn't watching my own thread.

Yes this is deployed on Kubernetes.

-

@dan do you have any ideas on next step to help figure out what is happening here?

-

@davidmw Hmmm.

What is the value of fusionauth-app.url / FUSIONAUTH_APP_URL for these nodes, and how is it set (env var, shared config.properties file)? I'm guessing that since you are running in docker, you are setting it, if at all, via env vars.

From the configuration reference, this is:

The FusionAuth App URL that is used to communicate with other FusionAuth nodes. This value is defaulted if not specified to use a localhost address or a site local if available. Unless you have multiple FusionAuth nodes the generated value should always work. You may need to manually specify this value if you have multiple FusionAuth nodes and the only way the nodes can communicate is on a public network.

https://fusionauth.io/docs/v1/tech/reference/configuration/

How is your network configuration set up? How can the nodes reach each other? (I'm not super familiar with k8s.)

-

@dan said in Multiple nodes sharing IP address?:

FUSIONAUTH_APP_URL

@dan

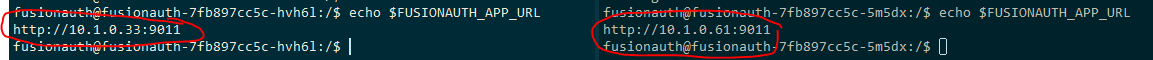

We have configured FusionAuth to run with 2 replicas, so it always spins up 2 pods on 2 different nodes.

We are setting FUSIONAUTH_APP_URL to http://POD_IP:9011, so it's different for each pod where FusionAuth is running. Attached is a screenshot of both pods' env variables.

We don't have any security policies so within the cluster all nodes can access each other.

We are still seeing the same IP address for both nodes in the FusionAuth UI, as shown in the screenshot provided by @davidmw.

Please let me know if you need anything else from us to troubleshoot this issue further.

-

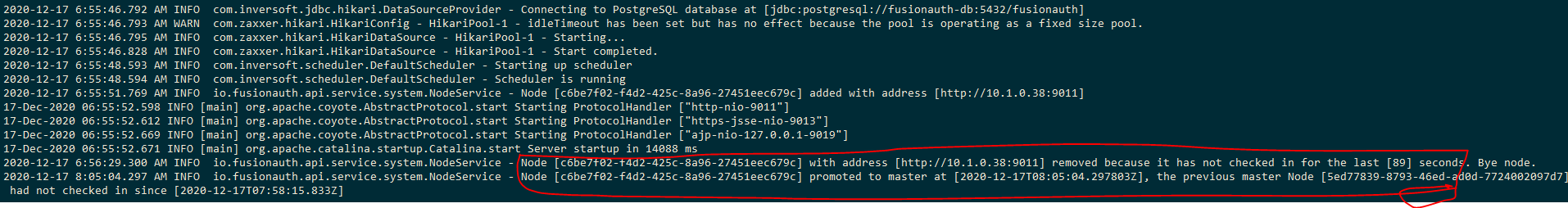

I'm looking through some things and it appears that when a node is added, there's a log message.

Node [...] added with address [...]

That should be in the output of your fusionauth instances at startup. Can you see these lines? What values do they have?

Thanks!

-

Hello @dan

Yes, I do see those statements in the logs. Below are the statements for the 2 nodes. They do have different node IDs and IP addresses.

io.fusionauth.api.service.system.NodeService - Node [c5ae863b-1e86-4858-8516-3dfc93866c04] added with address [http://10.1.0.39:9011]

io.fusionauth.api.service.system.NodeService - Node [4af1d532-79a7-4a5c-b45a-4e1e7338a1fb] added with address [http://10.1.0.53:9011]

-

Thanks!

This looks like a UI bug. I don't believe this is related to the instability mentioned in the first post in this thread.

Here's the bug I filed: https://github.com/FusionAuth/fusionauth-issues/issues/1030

Thanks for answering my questions!
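For anyone who wants to run the same check against their own logs, here is a minimal sketch. It only assumes the log line format quoted above (Node [...] added with address [...]); the function names are mine and not part of FusionAuth.

```python
import re
from collections import Counter

# Matches the NodeService startup entry quoted in this thread.
# The pattern is an assumption based only on those two log lines.
NODE_ADDED = re.compile(
    r"Node \[([0-9a-fA-F-]+)\] added with address \[([^\]]+)\]"
)


def node_addresses(log_text):
    """Return {node_id: address} for every 'Node [...] added' entry found."""
    return {m.group(1): m.group(2) for m in NODE_ADDED.finditer(log_text)}


def shared_addresses(nodes):
    """Return the addresses that more than one node id registered with."""
    counts = Counter(nodes.values())
    return sorted(addr for addr, n in counts.items() if n > 1)


if __name__ == "__main__":
    # The two lines posted earlier in this thread.
    sample = (
        "io.fusionauth.api.service.system.NodeService - Node "
        "[c5ae863b-1e86-4858-8516-3dfc93866c04] added with address "
        "[http://10.1.0.39:9011]\n"
        "io.fusionauth.api.service.system.NodeService - Node "
        "[4af1d532-79a7-4a5c-b45a-4e1e7338a1fb] added with address "
        "[http://10.1.0.53:9011]\n"
    )
    nodes = node_addresses(sample)
    for node_id, address in nodes.items():
        print(node_id, address)
    print("shared addresses:", shared_addresses(nodes) or "none")
```

If the list of shared addresses is empty, the nodes registered with distinct addresses and any duplicate shown on the About screen is coming from somewhere else.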

-

Okay so if we refocus on the apparent instability of the nodes - how can we check on node startup times to see if the data reported in the About UI is accurate?

-

@davidmw said in Multiple nodes sharing IP address?:

Okay so if we refocus on the apparent instability of the nodes - how can we check on node startup times to see if the data reported in the About UI is accurate?

I'm not sure how to answer that question, probably because I don't know your environment very well.

Doesn't k8s record the node startup time when you deploy something? Or can you manually restart a node at a known time and see how that compares to what fusionauth reports?

What am I missing?
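If it helps, here is a rough sketch of how the k8s side could be compared with the About screen. It assumes you have saved the output of kubectl get pods -o json and reads the standard pod status fields (restartCount, state.running.startedAt, lastState.terminated); the function name and the sample pod below are made up for illustration.

```python
def summarize_restarts(pod_list):
    """Summarize container start times and restarts.

    pod_list is the parsed JSON from `kubectl get pods -o json`.
    """
    rows = []
    for pod in pod_list.get("items", []):
        pod_name = pod["metadata"]["name"]
        statuses = pod.get("status", {}).get("containerStatuses", [])
        for cs in statuses:
            running = cs.get("state", {}).get("running") or {}
            terminated = cs.get("lastState", {}).get("terminated") or {}
            rows.append({
                "pod": pod_name,
                "container": cs["name"],
                "restarts": cs.get("restartCount", 0),
                # When the current container instance started.
                "started_at": running.get("startedAt"),
                # Why the previous instance stopped, if there was one.
                "last_exit_reason": terminated.get("reason"),
                "last_exit_code": terminated.get("exitCode"),
            })
    return rows


if __name__ == "__main__":
    # Hypothetical data, shaped like kubectl's JSON output.
    sample = {
        "items": [{
            "metadata": {"name": "fusionauth-example-pod"},
            "status": {"containerStatuses": [{
                "name": "fusionauth",
                "restartCount": 3,
                "state": {"running": {"startedAt": "2020-12-19T23:10:00Z"}},
                "lastState": {"terminated": {"reason": "OOMKilled",
                                             "exitCode": 137}},
            }]},
        }]
    }
    for row in summarize_restarts(sample):
        print(row)
```

A non-zero restart count with a last exit reason would suggest that k8s is restarting the container, in which case the started_at value is the one to compare with what fusionauth reports.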

By the way, if you are running FusionAuth in production, we strongly encourage you to get a support contract. Having one allows access to the engineering team via support tickets. https://fusionauth.io/pricing/ Chasing this kind of bug down is something they're quite good at.

-

We do see node start times in the logs, but we can't see the reason for the node restarts. The FusionAuth nodes (pods) are being restarted every day.

I'm trying to understand the highlighted portion of the logs. For some reason NodeService is removing the node. The node had just started and was removed within a minute, so it may not be due to the health of the node.

I can't find much information about setting up a FusionAuth multi-node cluster. Can you share links to such documentation?

-

I think that you got some answers over in github: https://github.com/FusionAuth/fusionauth-issues/issues/373#issuecomment-749759257

I don't have any timelines for multinode documentation; in our experience it "just works". But I'll put it on the list :).

-

I wrote a guide for running fusionauth in a clustered/multi node setup: https://fusionauth.io/docs/v1/tech/installation-guide/cluster/

The bug about the IP addresses being the same (which was only a display bug, not a functionality bug) was also addressed in 1.23.0: https://fusionauth.io/docs/v1/tech/release-notes/#version-1-23-0